Archive

SSAS: how to import data into SQL Engine from SSAS

This will show you how to import data from SSAS to SQL Engine.

Connect to SQL Engine and Right Click on database where you like to import data from SSAS – Let’s say in my case I have a test database

It will open Import Windows – Provide Data Source (Select Provider for Analysis Services – in my case I am using SQL 2008R2, so selected 10.0)

Hit Properties for providing SSAS Server and Database Details

Choose a Destination

So you have an option of Writing a query or select a table – I haven’t explored option of writing Query, so selected Select Table

Select Single or Multiple table – for demo purpose, I have selected Item as Source

Hit Preview – this will show you data which you are going to import

there is a gotcha, if you click on Edit Mapping you will find something strange –

Yes, you got it – its data types

So, you need to change the data types, something as shown below –

Click OK – > Next and Hit Finish

Some warnings – related to truncate of data, I stated Type as Varchar and Size 254 – so it’s just informing that I can import only that much data in a column,

so I can ignore this message, because I know that I have less than 254 chars in my Attribute / Column / Filed.

After import checked table and found – as I have very small result set, so I can confirm that imported successfully

Questions – If I lost my SQL Database (RDBMS), can I use this methodology to get my data back from the SSAS Database?

Answer – No, it may not give you complete data again depends on design of your Database, let’s say you have ROLAP Partitions (Fact) in case of ROLAP you will not able to import data because AS doesn’t store data in SSAS – and if RDBMS source is missing for ROLAP Partition, Select query will not work; for MOLAP you can do but as I said earlier design of your database may impact data import.

As you have seen earlier in Item Dimension, I have only two columns but if you check DSV in a SSAS Database you will find three columns – oops which means, I lost one column – yes you are right.

So why Col is missing during import – here is an explanation, if you notice in my Dimension Structure I have only two attributes, that explains why only two columns were visible when we were importing.

Second question is why we are getting Item Name, in Item ID column.

Item – is a key attribute but if you notice Properties of this Key Attribute you will find what’s going on

Notice here, Name Col is ItemName – that explains – why I can’t see ItemId Value when I have imported data; Instead of ID as integer value its showing Name of the Item – which is expected because I set name column property.

Like I told you earlier – for disaster recovery you can’t rely on such imports – you need to have your SQL / RDBMS Backups in Place.

PowerPivot–Enable Logging

For enabling Verbose Logging follow these steps –

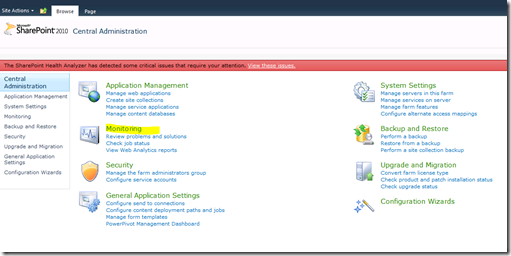

Go to Central Admin and Click on Monitoring

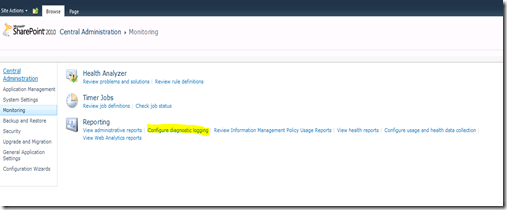

Click on Configure Diagnostic Logging

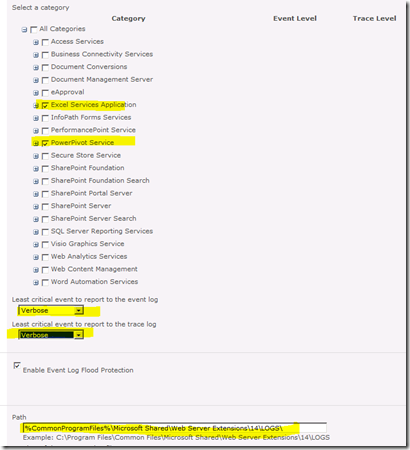

Enable Verbose Logging as shown below – Here I have enabled Logging for Excel and PowerPivot

Set Least Critical Event to report to the Event Log and Trace Log to Verbose as shown below and Click OK

Further Ref –

http://technet.microsoft.com/en-us/library/ee210681(v=sql.105).aspx

X========End of Article=======X

Dimension: ProcessUpdate – Insight

ProcessUpdate applies only to Dimension. It is the equivalent of incremental dimension processing in Analysis Services 2000. It sends SQL queries to read the entire dimension table and applies the changes—member updates, additions, deletions.

Since ProcessUpdate reads the entire dimension table, it begs the question, “How is it different from ProcessFull?” The difference is that ProcessUpdate does not discard the dimension storage contents. It applies the changes in a “smart” manner that preserves the fact data in dependent partitions. ProcessFull, on the other hand, does an implicit ProcessClear on all dependent partitions. ProcessUpdate is inherently slower than ProcessFull since it is doing additional work to apply the changes.

Depending on the nature of the changes in the dimension table, ProcessUpdate can affect dependent (related) partitions. If only new members were added, then the partitions are not affected. But if members were deleted or if member relationships changed (e.g., a Customer moved from Redmond to Seattle), then the aggregation data and bitmap indexes on the related partitions are dropped. The cube is still available for queries, albeit with lower performance.(with ProcessUpdate Flexible aggregations and indexes on related partitions will be dropped)

After ProcessUpdate you need to Process Indexes on the Partitions. The simple approach of dealing with such problem is to do default processing on associated Cubes, default processing will create dropped Aggregations and Bitmap indexes for affected partitions.

Three Options you can use for building Indexes / Aggregates –

Lazy processing is the mechanism by which the Analysis server automatically builds bitmap indexes and aggregations for dimensions and partitions. Lazy processing is done in the background at a low priority, so it does not interfere with foreground operations such as user queries. The advantage of lazy processing is that it reduces the foreground processing time and the cube is available earlier to users, albeit with lower performance.

2) ProcessAffectedObjects while Processing Dimension (Process Update) – that tells the server to reprocess other objects impacted by this command, benefit of this is All Affected Objects will be process in same Batch.

3) Process Index on Measure Groups or Process Default of a Cube

Scenario A: Update a Product Color (Attribute Key is Color) for a single Product which had a Historical Record in Year 2005 and 2006.

1) If you notice for this specific product got sales only in Year 2005 and 2006

select ProductKey,orderdatekey from testinternestsales

where Productkey=324

2) Created A Test Cube based on TestDate and TestProd-

TestDate –

TestProd

With Color – it got Flexible Relationship.

Four Partitions based on –

2005 WHERE OrderDateKey <= ‘20051231’

2006 WHERE OrderDateKey >= ‘20060101’ AND OrderDateKey <= ‘20061231’ and same was for 2007 / 2008

Dimension Usage

Key Col and Name Col is Color.

3)Auto-Slicing is correct, can be proved by simple query –

Select {[Measures].[Sales Amount]}on 0,[Testprod].[Product Key 1].&[324] on 1

from [Test] WHERE [Test Date].[Calendar Year].&[2005]

4) SELECT * FROM SYSTEMRESTRICTSCHEMA($SYSTEM.DISCOVER_PARTITION_DIMENSION_STAT

, CUBE_NAME = ‘TEST’ , DATABASE_NAME = ‘Adventure Works DW 2008R2’ ,MEASURE_GROUP_NAME = ‘Testinternestsales’

,PARTITION_NAME = ‘2005’)

Checking if Indexes are in Place, if you notice here For Color in all years Indexes are in Place.

5)Also checked in Folder

E:\data\Adventure Works DW 2008R2.0.db\TEST.40.cub\Testinternestsales.40.det\2008.37.prt

E:\data\Adventure Works DW 2008R2.0.db\TEST.40.cub\Testinternestsales.40.det\2005.36.prt

Colro.Map files are in place

6)Fired this query for updating Color for ProductID 324 (As shown earlier it got records only for 2005 and 2006 in Fact Table)

update testprod

set color=’Test Green’

where productkey=324

7) Process Update

Fired same DMV and if you notice Attribute Index for Color is dropped for 2005 as well as 2008

Scenario B: Update a Product Color (Attribute Key is Color) for a product which doesn’t have any record in fact table (No History or Current Record)

You can see all flex Aggregates

Changed – Color of a Product, which does not have any record in Fact table

After Process Update – all flex aggregates are gone only Index files are left that too for all attributes except Color

Scenario C In earlier mentioned Scenario – Color is Key Column, for testing I have changed the Key Col – ColorID and Name Col. Kept as Color.

Changed Color

update testprod set color=’ASAT’ where productkey=609

So in this case, I am updating a color but internally I am referring ColorID as Key Column for this Color Attribute. Which internally is not impacting Index Mapping and hence not dropping indexes / aggregates

If your design is somewhere near to Scenario 1 or 2, Indexes / Aggregates will get dropped but if you are following the practice given in Scenario 3, It will not drop indexes because it’s not impacting the Key Col (and that’s why it’s a suggested practice to use Name Col for Attributes).

Scenario D: Dropped a record from Dimension and shown an impact on Aggregates. as expected its dropped all Attribute Indexes (Map files) and Flex Aggregates

SELECT * FROM SYSTEMRESTRICTSCHEMA( $SYSTEM.DISCOVER_PARTITION_STAT , CUBE_NAME = ‘Test’, DATABASE_NAME = ‘Adventure Works DW 2008R2’ , MEASURE_GROUP_NAME = ‘Testinternestsales’ , PARTITION_NAME = ‘2005’)

Aggregates are present.

SELECT * FROM SYSTEMRESTRICTSCHEMA($SYSTEM.DISCOVER_PARTITION_DIMENSION_STAT

, CUBE_NAME = ‘TEST’ , DATABASE_NAME = ‘Adventure Works DW 2008R2’ ,MEASURE_GROUP_NAME = ‘Testinternestsales’

,PARTITION_NAME = ‘2005’)

Now fired below mentioned query for checking indexes

SELECT * FROM SYSTEMRESTRICTSCHEMA($SYSTEM.DISCOVER_PARTITION_DIMENSION_STAT

, CUBE_NAME = ‘TEST’ , DATABASE_NAME = ‘Adventure Works DW 2008R2’ ,MEASURE_GROUP_NAME = ‘Testinternestsales’

,PARTITION_NAME = ‘2005’)

ProductKey 611 doesn’t existing in Fact Table

This signify that we don’t have record for this guy

delete from testprod

where productkey=611

Now if you notice all flex aggs are dropped you can see size as 0

Indexes – for all attributes are dropped because we have deleted a record and it had impacted all Attributes

*———–End of Article—————-*

![clip_image001[6] clip_image001[6]](https://karanspeaks.com/wp-content/uploads/2012/05/clip_image0016_thumb.png?w=428&h=376)

![clip_image002[6] clip_image002[6]](https://karanspeaks.com/wp-content/uploads/2012/05/clip_image0026_thumb.jpg?w=563&h=79)

![clip_image003[6] clip_image003[6]](https://karanspeaks.com/wp-content/uploads/2012/05/clip_image0036_thumb.png?w=527&h=314)

![clip_image004[6] clip_image004[6]](https://karanspeaks.com/wp-content/uploads/2012/05/clip_image0046_thumb.png?w=563&h=52)

![clip_image005[6] clip_image005[6]](https://karanspeaks.com/wp-content/uploads/2012/05/clip_image0056_thumb.png?w=550&h=148)

![clip_image002[11] clip_image002[11]](https://karanspeaks.com/wp-content/uploads/2012/05/clip_image00211_thumb.jpg?w=493&h=285)

![clip_image001[10] clip_image001[10]](https://karanspeaks.com/wp-content/uploads/2012/05/clip_image00110_thumb.png?w=268&h=284)

![clip_image002[13] clip_image002[13]](https://karanspeaks.com/wp-content/uploads/2012/05/clip_image00213_thumb.jpg?w=244&h=94)

![clip_image004[9] clip_image004[9]](https://karanspeaks.com/wp-content/uploads/2012/05/clip_image0049_thumb.jpg?w=453&h=137)